Following our recent post about procedural asset management, we continue to explore the less documented challenges presented by procedural audio in games. This time, we are looking at testing and debugging procedural models.

Typical audio bugs

With sample-based sound effects, audio bugs usually fall into a few well-known categories. An experienced tester can describe and classify them fairly easily, and an audio programmer will promptly find the root of the issue. A sound effect is simply a sample that is triggered, or several of them either mixed or played sequentially. Sometimes, random sample selection and random settings (volume, pitch, etc.) will spice things up a bit, as will RTPCs (real-time parameter controls), but debugging usually stays relatively simple.

Here are, for instance, a few typical bugs and some probable causes:

- the sound is not playing: is the bank loaded, is the event triggered in the game, is it a voice management issue (not enough free voices, priority too low), is the distance attenuation correct?

- the volume / panning is wrong: are the settings correct, is there a problem with 3D positioning, mixing group etc…?

- the sound is stuck in looping mode: was it never stopped from code, was the hardware voice never released?

- the sound is not looping: are the looping points correctly set, is the voice being cut by the voice manager?

- the sound is stuttering: is it a streaming error, are there too many concurrent voices?

- garbage data is played: was the sample correctly encoded / loaded / decoded, did something write over our data?

This is of course not an exhaustive list, but you will notice that in most cases, except for freak accidents, the problem is relatively clear: the sound will be playing or not, looping or not, too loud, at the wrong pitch, etc. These issues are relatively easy to trace back in a conventional sample playback engine.

Procedural model complexity

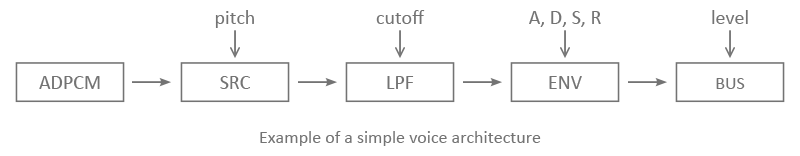

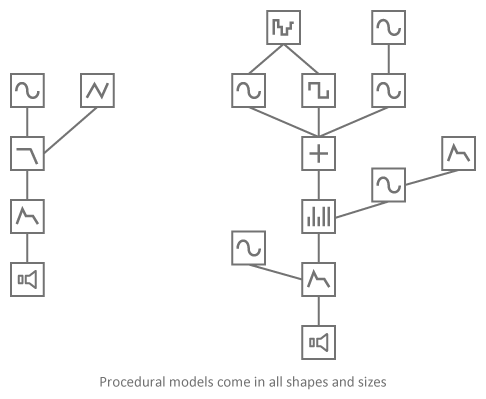

Where sample-based audio engines use a similar playback architecture for all sound effects, procedural audio introduces different models. These models can have different levels of complexity and use various synthesis techniques to generate audio signals. The signal path within a specific model can even vary depending on the parameters coming from the game. For instance, more or fewer resonant modes could be generated for an impact sound depending on the surface hit, more bandpass filters could be used for a wind sound based on the objects in the scene, and so on. The CPU cost is no longer guaranteed to be constant per voice.

This means that it can be harder to pinpoint the source of procedural audio bugs. In addition to issues linked to memory management, loading of the banks, audio level settings and so on, bugs of a more qualitative nature will appear (it sounded a bit “strange”, or a bit brighter, etc.).

The fact that procedural audio models can be a lot more integrated with the other game subsystems – that’s one of the benefits of real-time sound generation after all – makes it harder to track the culprit. Is the problem coming from a specific combination of parameters or from the model itself? It is harder to describe the exact conditions under which a bug will occur, and it might be harder to reproduce it. In order to do that, it seems that the tester himself/herself should know about the structure of the model or at least be able to refer to it!

Finally, fixing the issue may not be as simple as replacing a wave file or adjusting a level, especially if it implies modifying the model. A model with a different architecture will likely not have the same CPU cost, which may even bring up new audio problems!

Test automation

Therefore, when prototyping a procedural audio model, it is essential to conduct a thorough examination of its behavior and stability so that issues are detected early. Should a problem arise with a procedural audio model during QA, it will indubitably be harder to detect its cause and to fix the issue.

At any given time, any combination of values inside (and sometimes outside!) the valid ranges could be sent to the parameters. It is important to have a way to simulate these simultaneous parameter changes. MIDI or OSC control surfaces and software are a good way to test a model, either with sliders, knobs, 2D panes or more evolved controllers.

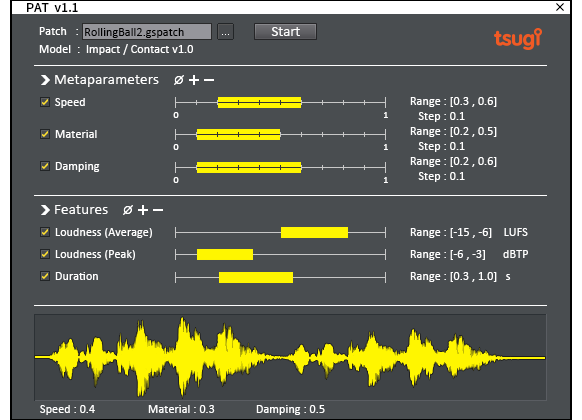

However, even in that case, it is hard if not impossible to try all combinations of parameters by hand. To deal with this situation, we developed a simple procedural audio tester, or PAT. PAT iteratively assigns all possible combinations of values to the model parameters, generates the corresponding sounds and runs audio analyses to detect whether the results are as expected.

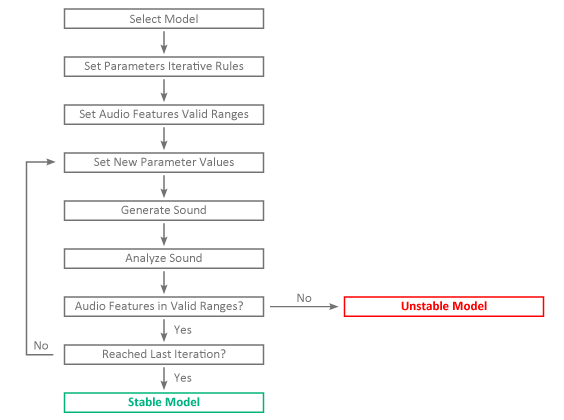

The following graph describes the basic flow of the program.

First, we select our model and, for each parameter, the range in which it should be tested. We are of course talking about the parameters that are exposed to the game engine. We also set an incremental step that will be used to calculate the successive parameter values. (In practice, the tests are first conducted using multiples of the incremental step in order to find obvious issues faster, and then we reduce the increments to focus on smaller changes.)
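The range-and-step setup described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not PAT's actual code: the parameter names (`wind_speed`, `gustiness`) and the helpers `value_range` and `parameter_grid` are hypothetical, and the coarse pass is modeled simply as a multiplier on each step.

```python
from itertools import product

def value_range(lo, hi, step):
    """Values from lo up to hi (hi is included when step divides the range evenly)."""
    count = int((hi - lo) / step + 1e-9)  # small epsilon guards against float rounding
    return [lo + i * step for i in range(count + 1)]

def parameter_grid(ranges, step_multiplier=1):
    """Yield every combination of parameter values as a dict.

    ranges maps a parameter name to a (lo, hi, step) tuple.
    step_multiplier > 1 gives a coarser first pass over the same ranges.
    """
    names = list(ranges)
    axes = []
    for name in names:
        lo, hi, step = ranges[name]
        axes.append(value_range(lo, hi, step * step_multiplier))
    for combo in product(*axes):
        yield dict(zip(names, combo))

# Hypothetical parameters exposed to the game engine.
ranges = {
    "wind_speed": (0.0, 1.0, 0.25),
    "gustiness": (0.0, 1.0, 0.5),
}

coarse = list(parameter_grid(ranges, step_multiplier=2))  # quick first pass
fine = list(parameter_grid(ranges))                       # full resolution

print(len(coarse), "coarse combinations,", len(fine), "fine combinations")
```

The number of combinations is the product of the number of values per parameter, so it grows quickly with each added parameter, which is why a coarse first pass is useful.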

Then, we select which audio features should be extracted from the generated sound and in what range they should fall. For example, we may want to make sure that the peak level stays within a given range, that the duration stays constant, that the model does not generate partials over a given frequency or that there is no transient similar to a click.
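As a rough illustration of such checks, the sketch below computes three simple features in plain Python and compares them to allowed ranges. The feature names, the limits and the use of the largest sample-to-sample jump as a crude click indicator are assumptions made for this example; a real analysis (partials over a given frequency, for instance) would need a spectral method that is not shown here. It also assumes the buffer holds at least two samples.

```python
import math

def peak_level(samples):
    """Largest absolute sample value."""
    return max(abs(s) for s in samples)

def max_sample_jump(samples):
    """Largest difference between consecutive samples (crude click indicator)."""
    return max(abs(b - a) for a, b in zip(samples, samples[1:]))

def check_features(samples, sample_rate, limits):
    """Return (feature, value, lo, hi) for every feature outside its limits."""
    features = {
        "peak": peak_level(samples),
        "duration_s": len(samples) / sample_rate,
        "max_jump": max_sample_jump(samples),
    }
    violations = []
    for name, value in features.items():
        if name in limits:
            lo, hi = limits[name]
            if not (lo <= value <= hi):
                violations.append((name, value, lo, hi))
    return violations

SAMPLE_RATE = 48000
# Test signal: 0.1 s of a 440 Hz sine at half scale.
clean = [0.5 * math.sin(2 * math.pi * 440 * i / SAMPLE_RATE) for i in range(4800)]
# Same signal with a single full-scale sample inserted to imitate a click.
clicked = list(clean)
clicked[2400] = 1.0

# Hypothetical limits chosen for this example only.
limits = {"peak": (0.0, 0.9), "duration_s": (0.099, 0.101), "max_jump": (0.0, 0.1)}

clean_violations = check_features(clean, SAMPLE_RATE, limits)
clicked_violations = check_features(clicked, SAMPLE_RATE, limits)
print(clean_violations)
print(clicked_violations)
```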

The main loop simply consists of setting the new values of the parameters (based on the selected ranges and increment values), generating the corresponding sound and performing the sound analyses. If the audio features are found to be out of their respective valid ranges, the model is not performing well and an error report is sent with the details about the current parameter values and the analysis results. If we reach the last iteration without any problem, the model is deemed stable. Note that PAT also tracks spikes in CPU cost and memory consumption.
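A minimal sketch of such a loop is shown below. Everything in it is hypothetical: `toy_model` is a stand-in for a real procedural model (a sine whose level is deliberately left unclamped so that some combinations fail), `run_sweep` is an illustrative name, and only the peak level is analyzed. CPU and memory tracking are left out.

```python
import math
from itertools import product

SAMPLE_RATE = 8000

def toy_model(gain, resonance, n=800):
    """Hypothetical stand-in for a procedural model.

    The output level grows with both parameters and is not clamped,
    so some parameter combinations exceed full scale.
    """
    amp = gain * (1.0 + resonance)
    return [amp * math.sin(2 * math.pi * 220 * i / SAMPLE_RATE) for i in range(n)]

def steps(lo, hi, step):
    """Values from lo up to hi, spaced by step."""
    return [lo + i * step for i in range(int((hi - lo) / step + 1e-9) + 1)]

def run_sweep(model, ranges, peak_limit):
    """Render every parameter combination and collect error reports."""
    reports = []
    names = list(ranges)
    for combo in product(*(steps(*ranges[name]) for name in names)):
        params = dict(zip(names, combo))
        samples = model(**params)               # generate the sound
        peak = max(abs(s) for s in samples)     # analyze it
        if peak > peak_limit:                   # feature out of range: report
            reports.append({"params": params, "peak": peak})
    return reports

reports = run_sweep(
    toy_model,
    {"gain": (0.0, 1.0, 0.5), "resonance": (0.0, 1.0, 0.5)},
    peak_limit=1.0,
)
stable = not reports  # the model is deemed stable only if nothing was reported

for report in reports:
    print("out of range:", report)
```

Each report carries the exact parameter values that triggered it, which is what makes the failing case reproducible later.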

An upcoming version will allow the parameters to change during the generation of the sound itself, since this can trigger other issues. For example, abrupt changes between very different values can sometimes create glitches.
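To illustrate why a mid-sound parameter change can glitch, the sketch below renders a sine whose gain switches from 0.1 to 1.0 while the waveform is at a peak, once instantly and once over a short linear ramp, and compares the largest sample-to-sample jump. This is not how the upcoming PAT version works; the signal, the switch point and the ramp length are assumptions chosen for the example, and the ramp is shown only as a common way to soften such changes.

```python
import math

SAMPLE_RATE = 8000
FREQ = 100.0   # one period lasts 80 samples
SWITCH = 420   # sample index where the gain changes (the sine is at a peak here)

def render(gain_at, n=800):
    """Render a sine whose gain at sample i is given by gain_at(i)."""
    return [gain_at(i) * math.sin(2 * math.pi * FREQ * i / SAMPLE_RATE)
            for i in range(n)]

def max_jump(samples):
    """Largest difference between consecutive samples."""
    return max(abs(b - a) for a, b in zip(samples, samples[1:]))

def abrupt(i):
    """Gain jumps from 0.1 to 1.0 in a single sample."""
    return 0.1 if i < SWITCH else 1.0

def ramped(i, ramp=128):
    """Gain moves from 0.1 to 1.0 linearly over `ramp` samples."""
    t = min(max((i - SWITCH) / ramp, 0.0), 1.0)
    return 0.1 + 0.9 * t

abrupt_jump = max_jump(render(abrupt))
ramped_jump = max_jump(render(ramped))
print("abrupt:", round(abrupt_jump, 3), "ramped:", round(ramped_jump, 3))
```

The abrupt version produces a discontinuity close to the full gain difference, which would be heard as a click, while the ramped version stays close to the normal slope of the sine.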

What about you, how are you testing your procedural audio models? Let us know or contact us to learn more about PAT and our consulting services in procedural audio.